Expanding Academic Vocabulary with a Collaborative On-line Database

Marlise Horst, Concordia University

Tom Cobb, Université de Québec à Montreal

Ioana Nicolae, Concordia University

Contact information:

Marlise Horst, Asst. Professor

TESL Centre, Dept. of Education, Concordia University

Phone: (514) 848-2424 x 2010

Fax: (514) 848-4295

Email: marlise@education.concordia.ca

http://education.concordia.ca/~marlise/home/

Tom Cobb, Professor

Département de linguistique et de didactique des langues, Université de

Québec à Montreal

Phone: (514) 987-3000 x 2743#

Email: cobb.tom@uqam.ca

http://www.er.uqam.ca/nobel/r21270/cv/

Ioana Nicolae, ESL instructor

Continuing Education - Language Institute, Concordia University

Phone: (514) 937-6925

Email: <i_nicola@alcor.concordia.ca>

Abstract

A suite of on-line resources for learning vocabulary was designed following research-based principles. University students in an experimental ESL course used the resources — concordancing, dictionary, cloze-builder and hypertext tools — to study items on the Academic Word List (Coxhead, 2000). They also read academic passages and shared information about unfamiliar words they encountered by entering them into an on-line database. Classmates studied entered words, definitions and context sentences using a special self-quizzing option along with the other on-line tools. The research explored four areas: quality of student-produced database entries, entries by students of varying first language backgrounds, learning gains achieved in the course, and connections between use of specific tools and learning gains. An agenda for future research is laid out.

Introduction

In a 1997 review of research-informed techniques for teaching and learning L2 vocabulary, Sökmen issued the following challenge to software designers:

There is a need for programs which specialize on a useful corpus, provide expanded rehearsal, and engage the learner on deeper levels and in a variety of ways as they practice vocabulary. There is also the fairly uncharted world of the Internet as a source for meaningful vocabulary activities for the classroom and for the independent learner. (p. 257)

The quote is interesting in a number of ways.

One obvious point is that the Internet has become familiar territory for both

course developers and language learners in the years since 1997. But finding

computer activities for vocabulary learning that meet the criteria Sökmen

mentions remains a challenge. First, vocabulary exercises available on

the web rarely "specialize on a useful corpus"; that is, there is no

apparent effort to identify words that occur frequently in the kinds of

language learners need to know or to draw on such a corpus to present words

targeted for learning in diverse sentence contexts. Too often designers simply

focus on any unusual items that happen to occur in a particular reading passage

— such that learners attend to items like wombat,

iguana and joey, but come away from the exercise not having worked with

frequent words like hunt, fly and hide (from a list of the 2000 most frequent words of English, West,

1953), or with words that would serve them well in reading academic texts like migrate, adapt and survive (from

the Academic Word List, Coxhead, 2000). Our review of over 50 sites for on-line

vocabulary learning identified only three that presented activities for

learning words that occur frequently in a specific corpus; these were the Compleat Lexical Tutor [1], the Virtual Language Centre [2], and

Haywood’s Academic Word List site [3]

(see endnotes for addresses of websites referred to in this article).

Opportunities for “expanded rehearsal,”

Sökmen’s second criterion, also tend to be limited. While learners can rehearse

in the sense of returning time and again to a computer exercise, software

rarely addresses the expansion aspect. That is, vocabulary activities do not

usually recycle targeted words in fresh contexts; this means they do not offer

learners the repeated, varied exposures that are needed to foster retention and

build transferable word knowledge

(Cobb, 1999). In our examination of over 50 vocabulary sites, we found

that words targeted for learning are usually presented in single sentence

contexts or else with no context at all. A number of the sites present reading

passages accompanied by vocabulary exercises but the exercises simply recycle

sentence contexts lifted from the passages rather than presenting the targeted

words in new ways. Of the many sites we explored, only a few offered

opportunities to see a new word in multiple sentence examples. We found one

where learners can encounter each of a limited (designer-selected) set of words

in two different sentence contexts, once in a multiple-choice question and then

again in a cloze activity [4]. Both the Compleat

Lexical Tutor [1] and the Virtual

Language Centre [2] offer a great deal more: an on-line concordancer that presents

multiple examples of a selected word in use and a cloze builder that allows

learners to test word knowledge in a variety of contexts. Haywood’s Academic Word List site [3] also

features a cloze builder but entered texts must be no longer than 2400

characters and the program does not offer feedback on correctness of responses.

Gerry’s Vocabulary Database [5]

offers on-line concordancing and options to generate cloze and matching

activities – but only to customers willing to sign up and pay. In sum, our

review of over 50 sites indicates that on-line activities offering expanded

vocabulary rehearsal are the exception rather than the rule, and that in some

of the cases where such activities are available, there is considerable scope

for addressing issues of capacity, interactivity and access.

The lack of variety in practice activities

noted by Sökmen and their tendency to foster superficial processing persist as

well. Overwhelmingly, the computerized vocabulary activities we examined

required learners to recognize language rather than to produce it. Learners

identify synonyms, almost always in a multiple-choice or flash-card format

accompanied by little more than feedback (often but not always available) in

the form of a correct/incorrect verdict and encouragement to try the same

exercise again. Almost always, learners simply click on an answer option; at

most, learners are required to type (i.e. spell) single words.

In this paper, we describe and test

innovative on-line computer resources that supported vocabulary learning in an

experimental course for university-bound learners of English in Canada. Both

the design of the vocabulary course and the computerized support activities

address Sökmen's challenge in several ways. First, the approach was corpus-based

in that words targeted for learning in the course included the 800 items on the

University Word List (UWL), a list of word families found to occur frequently

and consistently in a large corpus of academic texts (Xue & Nation, 1984).

More recent sessions of the course have focused on Coxhead's (2000) updated and

more streamlined 570-word Academic Word List (AWL). The approach was also

corpus-based in another sense: the course materials included a set of academic

readings chosen by the students themselves and vocabulary from these readings

that they selected to study. The idea was to give students a role in

identifying words that were important to know. It was expected that this

mini-corpus, which reflected the reading and study interests of class members,

would serve as a useful source of infrequent and/or domain-specific words that

do not occur on the UWL or AWL.

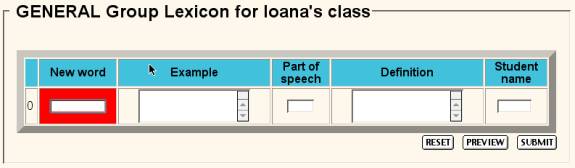

Secondly, an on-line word bank activity (see Figure 2 for sample entries) engaged

students in the more effortful processing that Sökmen mentions. Using the Word

Bank involved learners in identifying important words to study, entering

definitions along with example sentences into the bank, and using gapped

example sentences to review their own and their classmates' words — all

activities that engage them in deeper processing than is needed to complete

more typical computerized word activities such as multiple-choice synonym

recognition. The sound feature, which allows students to hear the entered words

and collocations, offers the learner the opportunity to process the information

in another modality. Using the concordancer available at the same site to study

the new words also encourages active processing of the type that can be

expected to foster retention. This study technique engages learners in

examining a new word as it appears in multiple sentence contexts located by the

concordancer. The learner attempts to guess the word's meaning, holds the

hypothesis in memory, and can confirm the guess by accessing the on-line

dictionary that is linked to the concordance interface (see Figure 4 for an

example of a concordance). Since the concordancer presents learners with many

different examples of a word in use, it also provides a basis for the expanded

rehearsal Sökmen is interested in. Learners can test their understanding of an

item like process across many

different sentence contexts that reveal its grammar functions (both noun and

verb) and its varied meanings (legal process, processed food, word processing,

etc.).

In addition to piloting these innovative ways

of assisting vocabulary learning, another important goal of the experimental

course was to challenge learners to study hundreds rather than mere dozens of

new words. Academic learners need to recognize the meanings of thousands of

English words in order to handle the reading requirements of university

textbooks effectively (Hazenberg & Hulstijn, 1996; Laufer, 1989, 1992), and

memory research reviewed by Nation (1982, 2001) suggests that learners can

acquire and retain knowledge of many more new word meanings than is usually

expected in language courses. These increased expectations were built into the

course design; we wanted to expose students to large numbers of useful new

words and challenge them to increase their vocabulary size. But which words (in

addition to the AWL) are most useful for a diverse group of academic learners

to know? How could we identify a large number of useful words for students with

differing L1 backgrounds, L2 proficiency and academic objectives? The

collaborative on-line word bank software provided an answer by putting the

decision in the hands of the students. This computer tool would allow them to

select for themselves the words they would study in the course. The principle

underlying the inclusion of both a corpus-based list of frequent academic words

(the AWL) and words chosen from academic texts by the students themselves was

the goal of reaching the 95% known-word coverage criterion, a point identified

by Nation (2001) and others as the watershed between non-comprehension and

comprehension of a typical text. This research indicates that a reader who

knows the meanings of 95% of the words in a reading passage (i.e. 19 words in

20) has effectively become an independent reader; that is, he or she

understands the text well enough to be able to guess the meanings of remaining

unknown words from context. Work by Sutarsyah, Nation and Kennedy (1994)

suggests that the 95% criterion can be achieved for academic texts if readers

have knowledge of the following groups of words: the 2000 most frequent words

of English (presumably already known to our course participants), the words on

the AWL (targeted by the designers for study in the course), and some recurring

domain-specific words (to be identified by the students).
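The 95% criterion is a simple ratio of known running words to total running words in a passage. The following is a minimal sketch in Python of that calculation, assuming a learner's known vocabulary is available as a plain set of word forms; the function name `known_word_coverage` and the sample passage are illustrative assumptions and are not part of the course software.

```python
import re

def known_word_coverage(text, known_words):
    """Return the proportion of running words in `text` that appear in `known_words`."""
    # Lowercase the text and keep alphabetic tokens (apostrophes allowed inside words).
    tokens = re.findall(r"[a-z]+(?:'[a-z]+)?", text.lower())
    if not tokens:
        return 0.0
    known = sum(1 for token in tokens if token in known_words)
    return known / len(tokens)

# Hypothetical example: 20 running words, of which only "balmy" is unknown.
passage = ("the birds fly south in the fall and they come back in the spring "
           "when the days are very balmy")
known = set(passage.split()) - {"balmy"}

coverage = known_word_coverage(passage, known)
print(f"{coverage:.0%}")  # 19 of 20 running words are known
```

A fuller version would count word families rather than individual word forms, as the frequency lists cited above do.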

The course and the computer activities are

described in more detail below. Then questions about the usefulness of the

tools and the learning results are explored in a number of experiments.

Course design

Figure 1

Homepage for Academic Vocabulary Development, an experimental ESL course

The context for the research was an experimental vocabulary course for intermediate-level academic learners of English at a Canadian university. In early 2000, course designers began looking for ways to diversify the ESL curriculum that was largely devoted to developing academic writing skills. It was decided to pilot a number of alternative courses of which the vocabulary course was one. Since students were struggling with the vocabulary (and reading comprehension) sections of an

in-house proficiency test they had to pass before taking content courses at the

university, it was thought that a course focusing directly on academic

vocabulary might be of more use than the usual integrated reading and writing

course with soft-target objectives. The experimental course was offered for the

first time in the fall semester of 2000 (see Horst & Cobb, 2001 for a

report). Over the course of subsequent sessions in 2001 and 2002, the course

was revised and the software was improved and expanded to include additional

activities. This paper draws on data gathered in several of these sessions. The

description of the course begins with a look at how students contributed to the

creation of the reading and vocabulary materials they would study. Later we

turn to the activities they used to study AWL words and the items they had

selected from the readings.

Building a set of academic readings for the

course involved requiring students to buy (or locate on the internet) the

weekend edition of a quality newspaper (Toronto

Globe and Mail) and read two articles of their choice each week from its Focus section, a supplement that

features essays on a variety of topics written in a style that can be termed

academic.

Each week students prepared summaries of the

articles they had chosen to read; they also used dictionaries to look up

unknown words from these readings. They then each selected words they felt

would also be useful for their classmates to know and entered them in the

on-line Word Bank created by the second author. This provided a simple way of

sharing the valuable information gleaned in the individual word quests. Figure

1 shows the homepage for the most recent session of the course. The button for

Word Bank entry appears at the top of the middle column under Focus Activities.

Clicking on this button brings up the Word Bank (see Figure 2). At the top of

the Word Bank page is the data entry template which presents the student with

spaces for entering a word, an example of the word used in context, word class

information, a dictionary definition, and the contributor's name. Each week the

students were required to enter five new words they had encountered in their

newspaper reading in the Focus Word

Bank. A sample of three Focus Word

Bank entries made in the most recent course also appears in Figure 2.

In addition to the Focus texts, students also read texts related to their domains of

study. Students with similar study interests were grouped together; for

instance, in the 2002 version of the course, the class was divided into four

groups around the domains of business, computer studies, science and

humanities. Group members were responsible for selecting suitable subject area

texts and sharing them with others in the group. Words from these readings were

entered regularly into Specialist

Word Banks; the links to these appear in the third column of the homepage

(Figure 1). Words entered by students in the computers group such as chip, code and port show that

the Word Banks offered good opportunities to study domain-specific words; the

inclusion of dynamic, estimate and herd indicates that other, more general words in these Specialist readings were also of

interest.

Figure 2

Data entry template and sample entries to

collaborative on-line database

Any claim that learning vocabulary with a

collaborative on-line database is effective rests on showing that students are

able to generate accurate and useful materials for their own learning.

Therefore we were interested in evaluating the quality of these

student-produced materials. We were also interested to see if learners provided

more informative Word Bank entries for their classmates to study when an

interactive feature was added. Contributing to the collaborative on-line Word

Bank had been an integral part of the course from the outset but in the summer

of 2002 a new activity was built in. This was the quizzing option (described in

detail below), which allows students to test their knowledge of the words

entered into the Word Bank by attempting to supply missing words in randomized

gapped versions of the student-entered example sentences. Our investigation of

the quality of the entries focuses on the example sentences students entered

before and after this addition. Thus the first research question is as follows:

1. What was the

quality of the context support for words entered in the on-line Word Bank, and

did the quality improve with the addition of the self-quizzing feature?

A second quality concern was the extent to

which the on-line word bank served the needs of different types of learners in

the group. We recognized that not every student would be interested in all of

every other student's word bank entries, but we reasoned that each student

would belong to a number of constituencies within the class that had common

vocabulary needs. For instance, if a commerce student was interested in a word

like tycoon, other students with

business interests might be curious about it too. Similarly, if a

French-speaking learner was unfamiliar with a word of Germanic origin like swivel, other Romance-language speaking

learners in the group might be unfamiliar with it as well. To determine whether

the collaborative on-line project was living up to its potential to offer

instruction tailored to individual needs, we identified two distinct first

language constituencies in the group, Asian vs. Romance language speakers, and

examined the words they entered in the on-line Word Bank. The Romance language

speakers were expected to enter fewer words of Latin and Greek origin since

they are able to exploit cognate knowledge for clues to meaning, a strategy not

available to Asian language speakers. Thus the second research question was as

follows:

2. To what extent did students of Asian and Romance language background

enter different types of words into the Word Bank?

The third and most important question

concerns learning. We were interested in the extent to which students acquired

new knowledge of the many words that were targeted in the course. As outlined

above, the vocabulary items learners studied came from two main sources,

entries in the on-line Word Bank and the AWL. A list of AWL words as well as

the collaborative Word Banks created each week were accessible to students for

study on the class web page. The research question that addresses new word

learning reads as follows:

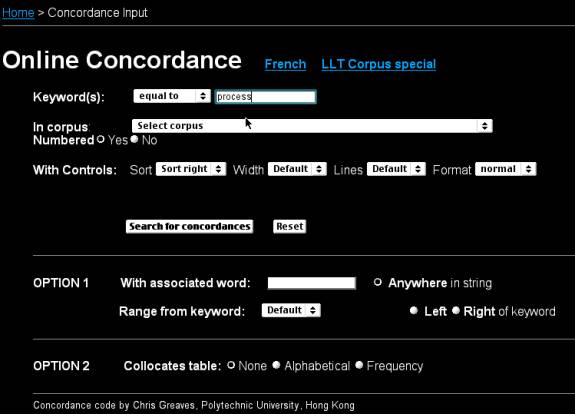

Figure 3

On-line concordance interface

3. To

what extent did learners increase their knowledge of vocabulary targeted for

study in the experimental course?

The course familiarized students with a

variety of research-based strategies for learning and retaining new vocabulary,

but we limited our investigation to five activities that involved interactive

on-line tools that in some way exploited corpus information, all available at

the class web page shown in Figure 1. The five activities were as follows:

examining concordance examples, consulting an on-line dictionary, reading

hypertext, using the quiz feature of the on-line Word Bank, and entering texts

into the cloze-passage maker.

The first three, concordancing, consulting a

dictionary, and reading hypertext, go hand in hand and can be categorized as

word discovery strategies. A student who concordances an unfamiliar word is

presented with multiple examples of the word drawn from large on-line corpora.

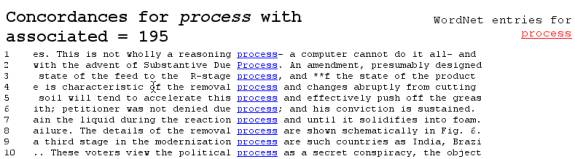

To concordance a word, the student types the word into the box labeled “Keyword(s)”, as shown in Figure 3, where the

word process has been entered. The

learner then chooses one of 14 available corpora and clicks on “Search for

concordances”. The concordancer

searches the corpus to find every occurrence of the selected word and displays

them in a format that allows the user to see the many different instances of

the word in use. A sample of concordance output drawn from the Brown corpus (Francis

& Kucera, 1979) for the word process

is shown in Figure 4.

Figure 4

First 10 lines of concordance output for the word process drawn from the Brown corpus
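The keyword-in-context display described above can be sketched in a few lines of Python. This is an illustration only and not the code behind the on-line concordancer; the function name `concordance`, the `width` parameter, and the three-sentence sample corpus are assumptions made for the example.

```python
import re

def concordance(keyword, corpus, width=30):
    """Return one keyword-in-context line for every whole-word match of `keyword`."""
    lines = []
    pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
    for match in pattern.finditer(corpus):
        left = corpus[max(0, match.start() - width):match.start()]
        right = corpus[match.end():match.end() + width]
        # Right-align the left context so that the keyword lines up in one column.
        lines.append(f"{left:>{width}} {match.group()} {right}")
    return lines

# Hypothetical mini-corpus standing in for a large corpus such as Brown.
sample_corpus = (
    "The legal process can take years. "
    "Factories process raw milk into cheese. "
    "Learning a language is a slow process."
)

for line in concordance("process", sample_corpus):
    print(line)
```

Aligning the keyword in a single column is what lets a learner scan down the list and compare the word's noun and verb uses at a glance.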

If guessing the meaning from the concordance

output proves difficult, the student can access an on-line dictionary

definition by requesting a dictionary definition from WordNet (see upper right

corner of Figure 4). This dictionary feature is also available at the class

website independent of the concordancer.

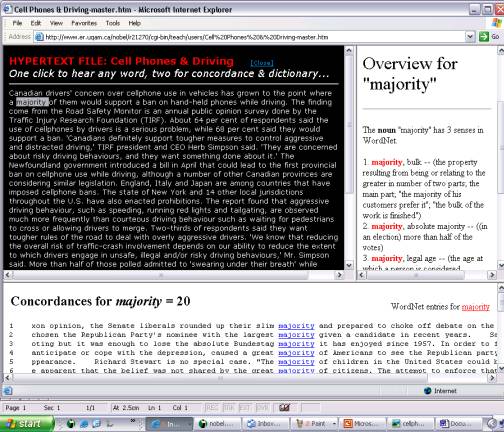

In addition, students had the option to read class texts (all of which

were available on-line) with the help of a third tool, the hypertext feature.

This tool transforms each word of any entered text into linked hypertext;

clicking on any word once allows the learner to hear the pronunciation of the

selected item. Clicking twice produces a concordance of the word that in turn

links to the on-line dictionary. An

example of a typical newspaper passage of the type used in the experimental

course appears in hypertext format in Figure 5 along with concordance and

dictionary support for the word majority.

The other two computer activities can be

termed practice strategies. The first of these involves using the quiz feature

of the on-line Word Bank: Once words and accompanying definitions and examples

have been entered into the Word Bank, students can create a personalized quiz

by first checking the boxes to the left of words they wish to study and then clicking on

the “Quiz checked items” button. As shown in Figure 6, this produces a screen

where the example sentences are randomized and presented in a gapped format.

Students can fill in the sentences by choosing from a menu of answer options

that consists of the selected words.

Help is available in the form of the word class information and

definition that accompanies the entry. Once the quiz has been completed, the

student is shown a score (percentage of correct answers) and information about

which items need to be revisited.
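The quiz logic described above (gap the example sentences, shuffle them, offer the selected words as a menu, then report a percentage and the items to revisit) can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the Word Bank's actual implementation: the field names, the functions `build_quiz` and `score_quiz`, and the tycoon and swivel entries are hypothetical, while the deterrent sentence is the student example quoted later in this paper.

```python
import random

def build_quiz(entries, rng=None):
    """Turn selected Word Bank entries into shuffled gapped sentences plus an answer menu."""
    rng = rng or random.Random()
    items = []
    for entry in entries:
        # Replace the first occurrence of the target word with a gap.
        gapped = entry["example"].replace(entry["word"], "________", 1)
        hint = f'{entry["word_class"]}: {entry["definition"]}'
        items.append({"prompt": gapped, "answer": entry["word"], "hint": hint})
    rng.shuffle(items)
    options = sorted(entry["word"] for entry in entries)
    return items, options

def score_quiz(items, responses):
    """Return the percentage of correct answers and the words that need to be revisited."""
    missed = [item["answer"] for item, given in zip(items, responses)
              if given != item["answer"]]
    percent = 100 * (len(items) - len(missed)) / len(items)
    return percent, missed

entries = [
    {"word": "deterrent", "word_class": "noun",
     "definition": "something that discourages an action",
     "example": "Punishments which are swift and sure are the best deterrent."},
    {"word": "tycoon", "word_class": "noun",
     "definition": "a wealthy, powerful business person",
     "example": "The shipping tycoon bought a third newspaper."},
    {"word": "swivel", "word_class": "verb",
     "definition": "to turn around a fixed point",
     "example": "She can swivel her chair to face the window."},
]

items, options = build_quiz(entries)
for item in items:
    print(item["prompt"], options)
```

Because the gap is made by exact string replacement, this sketch only works when the example sentence contains the entered word in the same form; inflected forms would need extra handling.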

Figure 5

Hypertext feature

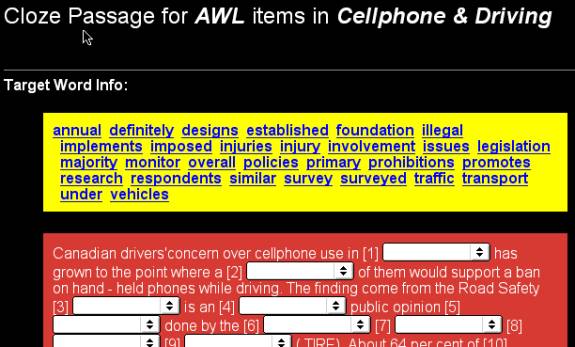

Finally, the fifth tool is the clozemaker. This

feature allows a student to enter a text that is then transformed into a gapped

passage where words of a selected frequency (1-1000 most frequent, 1001-2000,

AWL, or off-list) are missing. As with the Word Bank quiz, the learner fills in

a space by choosing the appropriate item from a menu that lists all of the

deleted words. This was presented to the students as a useful way to review AWL

words. An example with the same passage about cellphones used to create the

hypertext reading activity in Figure 5 is shown in Figure 7. Each of the

deleted items appears in the “Target Word Info” box at the top of the exercise.

Students who want to check their understanding of one of these items can click

on the word; this brings up a concordance along with a link to the on-line

dictionary.
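The gapping step of a cloze builder of this kind can be sketched in Python as below. The sketch assumes the chosen frequency band is supplied as a set of word forms; the function name `make_cloze` and the sample passage are illustrative assumptions, and the three target words are the AWL examples named in the Introduction rather than the full list.

```python
import re

def make_cloze(text, target_words):
    """Gap every word of `text` found in `target_words`; return the passage and a menu."""
    deleted = []

    def gap(match):
        word = match.group()
        if word.lower() in target_words:
            deleted.append(word.lower())
            return "_____"
        return word

    gapped = re.sub(r"[A-Za-z]+", gap, text)
    # The menu lists each deleted word once, in alphabetical order.
    return gapped, sorted(set(deleted))

# Hypothetical band: three word forms standing in for the AWL.
awl_sample = {"migrate", "adapt", "survive"}
passage = "Birds migrate south to survive the winter, and some adapt to city life."

gapped, menu = make_cloze(passage, awl_sample)
print(gapped)  # Birds _____ south to _____ the winter, and some _____ to city life.
print(menu)    # ['adapt', 'migrate', 'survive']
```

A production tool would match whole word families (adapts, adapted, adaptation) rather than the exact forms used here.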

Figure 6

Word Bank quiz (based on entries shown in Figure 2)

Figure 7

Clozemaker exercise with gapped AWL words

We were interested in assessing the extent to

which learners used the various computer tools on offer in studying the

vocabulary targeted for learning in the course. We also wanted to examine the

connection between students' use of the computer tools and any eventual word

learning outcomes that occurred in the course. These concerns prompted the

final research questions that read as follows:

4.

Which of the on-line activities were used most? To what extent were vocabulary

gains achieved in the course associated with use of particular ones?

In summary, the experimentation focused on

four different aspects of the computer-assisted course. First, we consider the

quality of the on-line word bank entries by examining the support for meaning

on offer in example sentences. Secondly, we explore the Word Bank’s potential

for addressing varying vocabulary needs in a diverse group of learners by

looking for differences in the kinds of words entered by Asian and Romance

language speakers. The third question addresses the core issue of the extent to

which new words were learned in the experimental course, while the fourth considers

use of the on-line study tools and looks for a connection to vocabulary growth

achieved in the course. In the next sections we describe the methodology and

results of the various experiments conducted to answer these questions,

beginning with a description of the participants.

Methodology

Participants and Context

The 33 students who registered for the first

session of the experimental vocabulary course at a university in Montreal in

2000 represented a variety of first language backgrounds. About two thirds of

the group were speakers of Asian languages (Chinese and Vietnamese) and about

one third had Romance language background (Quebec French, Spanish or

Portuguese). There were also Arabic, Farsi and Russian speakers in the group.

There was a range of abilities in the class but they can be generally termed

intermediate-level learners. Most had been admitted to the university on the

condition that they take courses to improve their English. The experimentation

reported in this paper also draws on data from two other intact groups. One of

these was similar in character to the original group and consisted of 14

students who took the experimental vocabulary course in the fall of 2002.

Another group of 28 ESL students also used the on-line tools in a reading-based

course at another Montreal university in the summer of 2002. They were

high-intermediate learners with French as their first language. With each

session of the course, new on-line study tools were developed and tested; in

the most recent session in the fall of 2002, students had access to all five

tools for the first time.

Procedures and Results

The first two research questions pertain to

the Word Bank itself: the usefulness of information offered in the

student-created materials and the extent to which they reflected the needs of

learners with different first language backgrounds. Answering these questions

involved examining sample entries in detail. Answering the third and fourth

questions about word learning and strategy use involved administering tests

of vocabulary knowledge and a questionnaire. These procedures and the

experimental findings are discussed in detail below, beginning with the

investigation of the context sentences students entered in the Word Bank.

Word Bank entries – investigating quality

Procedure

To investigate the quality of students'

example sentences and the effect of adding the study option, we randomly

selected two sets of 60 sentences that were entered into the Word Bank during

an 8-week course in the summer of 2002. One set sampled entries made during

Week 2 of the course, a point at which students were judged to be fully

familiar with using the on-line tools to enter items into the Word Banks. The

second came from entries made during Week 5 just after the new feature — the

self-quizzing option — had been added.

To assess the extent to which an example

sentence supported the meaning of the target word, we followed a method

inspired by Beck, McKeown and McCaslin (1983). First, we deleted the target

words from the 120 sentences and asked four native speakers to supply the

missing items. These responses were then evaluated by two native speaker

raters. For instance, four responses to the gapped version of the sentence

"Punishments which are swift and sure are the best ________," were kind, answer, deterrent and deterrent. This sentence had been

entered by a student as a context sentence for the word deterrent. Responses that bore no clear resemblance to the meaning

of the target word (kind and answer) were awarded a score of 0 points

while the two exact matches were each awarded a score of 1 point. In this case,

the total score for the example sentence was 2 points (0 + 0 + 1 + 1 = 2).

Responses that approached the meaning of the gapped word such as children (target = offspring) or tremble

(target = shudder) were awarded .5

points. Thus the possible supportiveness score given an example sentence ranged

from 0 points (no responses resemble the meaning of the target) to 4 (all four

responses match the target exactly). There was a large amount of agreement in

the scores awarded by the two raters (inter-rater reliability coefficient =

.92). Scores assigned by the two raters were added together, resulting in a

single score for each example sentence that ranged from 0 to 8 possible points.

Then the two sets of 60 scores (from Weeks 2 and 5) were tested for differences

using a t-test for unmatched samples.

It was expected that using the Word Bank to study for class tests would prompt

students to enter more informative sentences with the addition of the new

interactive quizzing option in Week 5.
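The scoring scheme described above reduces to a small amount of arithmetic, sketched here in Python. The judgement labels (`"exact"`, `"close"`, `"none"`) and the function names are assumptions introduced for the illustration; the point values and the deterrent example come from the procedure itself.

```python
def rater_score(judgements):
    """Sum one rater's judgements of the four responses to one gapped sentence (0 to 4)."""
    points = {"exact": 1.0, "close": 0.5, "none": 0.0}
    return sum(points[judgement] for judgement in judgements)

def sentence_score(rater_a, rater_b):
    """Add the two raters' scores to get the 0-to-8 supportiveness score for a sentence."""
    return rater_score(rater_a) + rater_score(rater_b)

# Responses to the deterrent sentence were: kind, answer, deterrent, deterrent.
deterrent = ["none", "none", "exact", "exact"]

print(rater_score(deterrent))                # 2.0
print(sentence_score(deterrent, deterrent))  # 4.0 when both raters judge alike
```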

Results

As Table 1 shows, the mean rating for all 120 entries amounted to 2.46 (SD = 2.2). The general picture emerging from this analysis is one of useful example sentences that support the meaning of the target words. Once the mean score of 2.46 is halved to arrive at the average score awarded by a single rater, the result (1.23) is just over the score attained when one of the informant responses matches the target exactly (1 + 0 + 0 + 0 = 1), or when two of them respond with words that are similar in meaning to the target (.5 + .5 + 0 + 0 = 1). Thus the mean score indicates that there were clues to meaning on offer in the sentences that one or two respondents were able to exploit successfully, although there was clearly also considerable variability.

The predominance of informative sentences is

confirmed in counts of successful and unsuccessful guesses. For 94 of the 120

gapped example sentences (78.33%), at least one of the respondents was able to

provide a response that either matched the target or closely approximated its

meaning. Only 26 (21.67%) of the sentences were given a score of 0 points by

both raters. In other words, in over three quarters of the sentences, there

were useful clues to meaning on offer that one or more of the respondents

exploited successfully. These findings support the results of the earlier

investigation of Word Bank entries (Horst & Cobb, 2001); that study also

found that the quality of example sentences (and definitions) on offer in the

student-produced on-line study materials was high. It is interesting to note

that students occasionally complained about spelling or grammar errors they

spotted in the Word Bank entries but to our knowledge, none have

complained about the semantic information on offer.

Table 1

Mean quality ratings of example sentences (n = 60)

| | Week 2 | Week 5 |
|---|---|---|
| Mean | 2.21 | 2.70 |
| SD | 2.29 | 2.14 |

t = 1.16, p > .05

Table 1 shows that mean ratings amounted to

2.23 (SD = 2.26) in Week 2 of the course and

2.69 (SD = 2.14) after the new feature was added. The increase in mean

ratings suggests that students did indeed become more interested in entering

examples that would serve them and their classmates well in the self-quizzing

activity. However, the t-test

indicated that this difference was not significant. A similar hint of improved

quality over time was found in a comparable analysis of context sentences in the

2000 session (for details, see Horst & Cobb, 2001) but there too,

differences were not statistically significant. Since students are probably

lifting context sentences directly from the reading passages rather than

carefully constructing informative sentences to support the meanings of entered

words, it is not surprising that the quality of the sentences remained fairly

consistent over time. Research by Zahar, Cobb and Spada (2001) indicates that

such naturally occurring sentences appear to support word meanings rather well;

thus the more pertinent question in the case of the Word Bank entries may have

been: Did the students supply enough

of the language surrounding an entered word to offer useful clues to meaning?

The results of the experiment indicate that the answer was yes.

Word Bank entries – investigating individual use

Procedure

To determine whether students of different L1

backgrounds were using the on-line resources in different ways to meet their

varying vocabulary needs, we examined words entered into the Word Bank by

students of Asian and Romance language background. To compare the words that

learners in the two groups entered, we prepared two corpora of 300 words each.

The Asian corpus consisted of 300 items entered in the Word Bank during the

first three weeks of the original course offered in 2000 by 14 learners whose

first language was Chinese or Vietnamese.

The Romance corpus consisted of the 300 items entered by 12 French,

Spanish and Portuguese speakers. Each corpus was analyzed using lexical

frequency profiling software (VocabProfile adapted by Cobb, 2000 from Laufer

& Nation, 1995). This program sorts the words of any entered text into the

following categories: words on the list of the 1-1000 most frequent word

families (see Note 1), words on the 1001-2000 most frequent list (West, 1953),

items on the Academic Word List (Coxhead, 2000), and "off-list" words

that do not occur on any of the lists.

A chi-square test was used to determine whether there were distinct

patterns in the two sets of category data. We hypothesized that the proportion

of entries from the AWL band (which contains many words of Greco-Latin origin)

would be larger in the Asian group than in the Romance group.
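The band-sorting step described above can be sketched in a few lines of Python. This is only an illustration, not the VocabProfile program itself: the three small word sets are placeholders for the full 1-1000, 1001-2000 and AWL lists, their membership is illustrative, the function name `profile` is hypothetical, and words are matched by exact form rather than by word family.

```python
from collections import Counter

# Placeholder lists (assumption): the real lists hold hundreds of word
# families each, and matching is done by family rather than exact form.
BAND_1K = {"room", "water"}
BAND_2K = {"storm"}
AWL = {"facilitate", "maximize"}

BANDS = ("1-1000", "1001-2000", "AWL", "off-list")

def profile(words):
    """Return the percentage of entered words that fall in each band."""
    counts = Counter()
    for word in words:
        w = word.lower()
        if w in BAND_1K:
            counts["1-1000"] += 1
        elif w in BAND_2K:
            counts["1001-2000"] += 1
        elif w in AWL:
            counts["AWL"] += 1
        else:
            counts["off-list"] += 1
    total = len(words)
    if total == 0:
        return {band: 0.0 for band in BANDS}
    return {band: 100 * counts[band] / total for band in BANDS}

# Hypothetical Word Bank entries, not taken from the study's corpora.
entries = ["room", "facilitate", "venom", "replenish"]
print(profile(entries))
```

Running the same function over each group's 300 entries would yield the kind of percentage distribution that the chi-square test then compares.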

Results

The results in Table 2 show that this hypothesis was borne out. The proportion of AWL words entered by students with Asian language background (18%) exceeded the proportion of Romance language entries in this category (11%). On the other hand, the Romance group entered more high frequency words than the Asian group. The overall pattern of entries in the two data sets was found to differ significantly (χ² = 13.83, df = 3, p < .05).

Table 2

Distributions by frequency of 300 words entered by two L1-based groups

| | 1-1000 | 1001-2000 | AWL | off-list |
|---|---|---|---|---|
| % in Asian group | 7 | 5 | 18 | 69 |
| % in Romance group | 18 | 9 | 11 | 61 |
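For readers unfamiliar with the chi-square comparison reported above, the following sketch shows how the statistic and its degrees of freedom are obtained for a table of two groups by four frequency bands. The raw counts behind Table 2 are not given here, so the counts in the example are hypothetical and the printed statistic will not match the reported value; the function name `chi_square` is also an assumption.

```python
def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for a count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if band choice were independent of group.
            expected = row_totals[i] * col_totals[j] / grand_total
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df

# Hypothetical counts (not the study's data): rows are the two groups,
# columns are the 1-1000, 1001-2000, AWL and off-list bands.
counts = [
    [20, 15, 55, 210],
    [55, 25, 35, 185],
]
stat, df = chi_square(counts)
print(f"chi-square = {stat:.2f}, df = {df}")
```

A table of two groups by four bands always gives df = (2 - 1) x (4 - 1) = 3, as in the reported result; with 3 degrees of freedom, a statistic above roughly 7.81 is significant at the .05 level.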

The symmetrical differences between the two groups are especially striking if the two high frequency categories (entered words in the 1-1000 and 1001-2000 most frequent bands) are taken together as shown in Figure 8. There we see that a total of just 12% (7 + 5) of the Asian entries were highly frequent English words, but more than twice as many of the entries made by Romance language speakers were words from this category. Over a quarter (18 + 9 = 27%) of all the words they entered were on the list of the 2000 most frequent English word families. Many of the most common English words are of Anglo-Saxon origin and have no cognate equivalents in Romance languages; this makes them more likely to be unfamiliar to Romance speakers than less frequent Latin-based English words such as facilitate or maximize. The occurrence of common words of Germanic origin like flew, storm and height on the list of Romance entries suggests that learners in the group were indeed directing their attention to non-cognates. It is clear that the two groups were looking up different types of words, and there is reason to believe that both groups were well served by the word learning opportunities offered in the collaborative on-line Word Bank. The bar chart also shows that the majority of the words students in both groups entered were in the low frequency "off-list" zone (69% in the Asian group and 61% in the Romance group); this is the category of words we expected the Word Bank would be used for.

Figure 8

Distributions of 300 entered words in two L1-based groups

Vocabulary learning – investigating growth

To answer the question about word learning

gains, we report outcomes of two versions of the course, the original 2000

session and the most recent 2002 session. As will become evident, the earlier

results identified a need for more refined testing. A measure designed to be

more sensitive to gains was piloted in the later session.

Procedure - fall 2000 session

To determine how much students had learned in the first (fall 2000) session of the course, we used the Vocabulary Levels Test (Schmitt, 2000; Schmitt, Schmitt & Clapham, 2001) to measure students' receptive vocabulary sizes at the beginning and end of the course. This research instrument is designed to assess receptive knowledge of words sampled from lists of the 2000, 3000, 5000 and 10,000 most frequent English word families and the AWL. Test-takers are asked to select meaning equivalents for target words in a multiple-choice format; a sample question cluster for words at the 3000 frequency level is shown in Figure 9. Participants were tested on their knowledge of words at the 3000, 5000, 10,000 and AWL levels. The 2000 level section was omitted at the outset of the course on the assumption that the vocabulary that university learners would select to study in the course (the on-line Word Bank entries) would be infrequent words (see Note 2). Vocabulary learning gains on the four tested levels were determined by calculating the differences between learners' pre- and post-test scores.

1. belt
2. climate
3. executive
4. notion
5. palm
6. victim

__ idea
__ inner surface of your hand
__ strip of leather worn around the waist

Figure 9

Sample question cluster, Vocabulary Levels Test, Version 1 (Schmitt, 2000)

Results - fall 2000 session

Mean scores on the Vocabulary Levels Test for the 28 participants who took the test at the beginning and end of the 2000 session are shown in Table 3. The maximum score possible in each section of the test was 30. While the general picture is largely one of growth, it is also evident that some of the gains are very small. It seems likely that some increases in knowledge of words that occurred in the group were not captured by the test instrument, which registers either success or failure in matching a word to its definition but no increments of knowledge between these two poles. Statistical analysis of the data (ANOVA and post-hoc t-test) showed that the only significant pre-post difference in means was on the AWL section of the test (t = 2.62; p < .05); other differences were not significant. Although the gain of about two new words in the AWL category may appear rather minor, if we extrapolate this result to the entire word list, we see that learners achieved a more substantial amount of growth. The gain of 1.73 words in 30 represents a growth rate of 5.8%; when this figure is applied to all 570 words on the AWL, we arrive at a gain figure of about 33 new words (.058 x 570 = 33.06). As mentioned, the testing did not tap increases in partial knowledge that are also likely to have occurred.
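The extrapolation from the 30 tested AWL items to the whole list can be retraced with a short calculation. The sketch below uses the AWL means from Table 3 and the list size given in the text; the variable names are illustrative only.

```python
pre_mean = 24.77     # AWL section, pre-test mean (Table 3)
post_mean = 26.50    # AWL section, post-test mean (Table 3)
items_tested = 30    # maximum score in each section of the test
awl_size = 570       # number of AWL items, as given in the text

gain = post_mean - pre_mean                 # mean gain in tested items
growth_rate = gain / items_tested           # proportion of tested items gained
estimated_new_words = growth_rate * awl_size

print(f"gain = {gain:.2f} items")
print(f"growth rate = {growth_rate:.1%}")
print(f"estimated new AWL words = {estimated_new_words:.0f}")
```

Carrying the unrounded rate through gives an estimate just under 33 words, while rounding the rate to .058 first gives the 33.06 reported above; either way the estimate is about 33 new words.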

Table 3

Pre- and post-test means on the Vocabulary Levels Test by frequency section (n = 28)

| | 3000 pre | 3000 post | 5000 pre | 5000 post | 10,000 pre | 10,000 post | AWL pre | AWL post |
|---|---|---|---|---|---|---|---|---|
| Mean | 24.61 | 24.86 | 20.86 | 20.77 | 9.68 | 9.77 | 24.77 | 26.50 |
| SD | 3.96 | 3.37 | 4.84 | 5.57 | 5.21 | 6.52 | 3.94 | 3.63 |

The finding of an increase in knowledge of AWL items is not surprising given the attention they received in class activities. Students participated in a variety of on- and off-line activities to support learning of these words and studied for weekly quizzes. One probable explanation for the finding of little growth in knowledge of words in non-AWL frequency zones — zones that the Word Bank activities were specifically designed to address — is that the Vocabulary Levels Test was not sensitive enough due to the sampling methods used in its construction. For instance, the test evaluates a learner's knowledge of just 30 of the words in the 5000-10,000 most frequent band; thus, a learner who used the Word Bank to acquire new knowledge of some of the thousands of words in this frequency range stands a low chance of being tested on those items on the 30-item test. In other words, the testing offered students limited opportunities to demonstrate new knowledge of infrequent words. For these reasons, in the 2002 session of the course we opted for a different testing method, one that would specifically target words that had been entered into the Word Bank.
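The sampling problem can be made concrete with a rough calculation. The sketch below assumes, as a simplification, that the 30 test items are a simple random sample from the roughly 5000 word families in the 5000-10,000 band; the number of words learned (50) is hypothetical, as is the function name.

```python
from math import comb

def chance_of_being_tested(band_size, test_items, words_learned):
    """Probability that at least one learned word is among the test items,
    assuming the items are a simple random sample from the band."""
    none_sampled = comb(band_size - words_learned, test_items) / comb(band_size, test_items)
    return 1 - none_sampled

band_size = 5000      # word families in the 5000-10,000 band
test_items = 30       # items that represent the band on the test
words_learned = 50    # hypothetical number of newly learned words

expected_hits = test_items * words_learned / band_size
p_any = chance_of_being_tested(band_size, test_items, words_learned)

print(f"expected number of learned words on the test: {expected_hits:.2f}")
print(f"chance that any learned word is tested: {p_any:.2f}")
```

Under these assumptions a learner who acquired 50 new words in the band could expect well under one of them to appear on the test, which illustrates why real growth might leave test scores unchanged.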

Procedure - fall 2002 session

Developing measures more sensitive to growth

achieved through the Word Bank activities for use in the investigation of the

2002 session involved selecting three magazine texts to be read in the course

(in addition to student-selected specialist

readings). Since the investigation of Romance and Asian entries reported above

indicated that most of the words students selected for entry into the Word Bank

were off-list items (words that did not occur on lists of the 2000 most

frequent English word families and the AWL), we decided to use off-list words

that occurred in the magazine readings as test targets on a pre-test. We

expected that when students eventually read the texts and entered words into

the Word Bank, entries would include some of these pre-tested words. We would

then be able to administer a post-test at the end of the session that would

allow us to compare students' knowledge of words they had entered into the Word

Bank (and studied using tools available on the class website) to their

knowledge of words that had not been entered. The procedure was as follows: at

the outset of the session, the students were asked to rate their knowledge of a

random sample of 150 off-list words that occurred in the magazine readings, 50

from each of the three texts. The

ratings instrument presented the students with the words and required them to

indicate whether they knew the meaning of an item by choosing one of three

options, YES (sure I know it), NS (not sure) or NO (I don't know it), as shown

in Figure 10. This is an adaptation of a technique developed and tested by

Horst and Meara (1999). In later weeks, students read the pre-selected texts

along with the other course readings and entered unfamiliar words into the Word

Bank as usual. As it happened, 21 of the 150 pre-tested words were eventually

entered into the Word Bank by students in the course and so made available for

study by all. This meant that by the

end of the course it was possible to ask students to rate their knowledge of

the pre-tested words again and assess the learning effects of the Word Bank

activities by comparing knowledge ratings for the 21 entered words to ratings

for the remaining 129 words that had also been encountered in course readings

but were not entered in the Word Bank.
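The comparison this design makes possible can be sketched as follows. The ratings and the choice of which words count as entered are hypothetical, and `percent_yes` is an illustrative helper rather than part of the study's materials.

```python
def percent_yes(ratings, words):
    """Percentage of the given words that one student rated YES."""
    rated_yes = sum(1 for word in words if ratings.get(word) == "YES")
    return 100 * rated_yes / len(words)

# Hypothetical ratings from one student on a handful of pre-tested words.
ratings = {
    "replenish": "NO", "thrive": "NS", "reefs": "YES",
    "vanish": "YES", "equator": "YES", "venom": "NO",
}
entered = {"replenish", "venom"}       # hypothetical Word Bank entries
un_entered = set(ratings) - entered    # words met in reading only

print(f"entered: {percent_yes(ratings, entered):.1f}% rated YES")
print(f"un-entered: {percent_yes(ratings, un_entered):.1f}% rated YES")
```

Computing the two percentages for each student at the start and end of the course gives the paired scores that the pre-post comparison requires.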

In addition to the ratings instrument -- a

self-assessment measure that allows a possible role for over-estimation of

gains -- the experiment also included

an individualized end-of-course test that required students to demonstrate

knowledge of words. Creating this test involved identifying 10 words that met

the following criteria: All 10 words were items a participant had rated NO (not

known) at the beginning of the course; 5 of these had eventually appeared in

the Word Bank while the remaining 5 had not. The test (based on Wesche and

Paribakht's Vocabulary Knowledge Scale,

1996) required students to produce a synonym of a target word and if possible,

to also incorporate it in a meaningful sentence. Sample questions from the

self-rating measure and the demonstration test are shown in Figure 10. Pre- and

post-test means on the two measures were tested for differences using t-tests for paired data.
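For reference, a t-test for paired data works on each learner's pre-post difference. The following minimal sketch computes the statistic by hand; the scores are hypothetical and the function name `paired_t` is an assumption.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(pre, post):
    """t statistic and degrees of freedom for paired pre/post scores."""
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    # Mean difference divided by its standard error.
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1

pre = [1, 2, 3, 4]     # hypothetical pre-test scores
post = [2, 4, 5, 4]    # hypothetical post-test scores for the same learners
t, df = paired_t(pre, post)
print(f"t = {t:.2f}, df = {df}")
```

Because each learner serves as his or her own control, the test is sensitive to consistent gains even when scores vary widely between learners.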

RATINGS MEASURE

Instructions: Circle YES if you are sure you know the meaning of the word. Circle NS if you have an idea about the meaning but you are not sure. Circle NO if you do not know the word. Don’t worry if you don’t know some of the words. Just answer as honestly as possible.

Example: room YES NS NO

1. replenish YES NS NO
2. thrive YES NS NO
3. credibility YES NS NO
4. reefs YES NS NO
5. vanish YES NS NO
6. equator YES NS NO

DEMONSTRATION MEASURE

Instructions: What do you know about these words? Please circle 1, 2, or 3 and complete.

venom
1. I don’t know what this word means.
2. I am not sure. I think it means …………………………… (Give the meaning in English, French or your language.)
3. I know this word. It means …………………………… and I can use it in a sentence. (Write the sentence.) ………………………………………………

Figure 10

Sample items on ratings measure (top) and demonstration measure (bottom)

Results - fall 2002 session

In analyzing the ratings data, we considered

percentages of words students rated YES (sure I know it) before and after

meeting all 150 words in their reading. As outlined above, 21 of these words were entered into the Word Bank;

this allowed us to compare gains in knowledge of words made available for study

using the on-line tools to gains made on words simply met in reading the

passages. Means shown in Table 4 indicate that at the outset, over half

(53.37%) of the words that were not eventually selected for entry into the Word

Bank were rated YES, while the mean for entered words was substantially lower

(39.32%). The lower knowledge score for entered words is not surprising; it

shows that in selecting unfamiliar words for entry into the Word Bank, students

identified items that tended to also be unfamiliar to their classmates.

Pre-post comparisons of mean percentages of words rated YES indicated that all 14 participants knew more words in both entered and un-entered categories at the end of the course than they had at the beginning. Knowledge of the 129 words that students met in reading the selected passages but that were not entered in the Word Bank increased significantly from about 53% to 69%, a gain of roughly 16 percentage points (t = 9.21, p < .0001); this small gain is consistent with accounts of word learning through naturalistic exposure in conditions where the cognitive processing demands are relatively low (Laufer & Hulstijn, 2001). Knowledge of the 21 items that were entered in the Word Bank increased more substantially, from around 39% at the beginning of the course to about 77% by the end, an increase of over 37 percentage points and more than double the gain made on the un-entered words. This difference was significant (t = 10.61, p < .0001). The change is especially striking since the mean knowledge level of these words was initially lower than that of the un-entered words and the endpoint higher. These results are shown in Table 4.

Table 4

Pre- and post-test means on ratings measure in percentages, un-entered vs. entered words

| | un-entered (n = 129) pre | un-entered (n = 129) post | entered (n = 21) pre | entered (n = 21) post |
|---|---|---|---|---|
| Mean | 53.37 | 69.09 | 39.32 | 76.65 |
| SD | 11.43 | 12.36 | 15.67 | 14.49 |
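The gains discussed above follow directly from the means in Table 4, as this short calculation shows. Only the table values come from the study; the variable names are illustrative.

```python
# Mean percentages of words rated YES, taken from Table 4.
means = {
    "un-entered": {"pre": 53.37, "post": 69.09},
    "entered": {"pre": 39.32, "post": 76.65},
}

# Gain in percentage points for each category of words.
gains = {group: m["post"] - m["pre"] for group, m in means.items()}
ratio = gains["entered"] / gains["un-entered"]

for group, gain in gains.items():
    print(f"{group}: gain of {gain:.2f} percentage points")
print(f"the entered gain is {ratio:.2f} times the un-entered gain")
```

The ratio of the two gains is a little over 2.3, which is the basis for describing the gain on entered words as more than double the gain on un-entered words.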

Performance on the second test that required

learners to demonstrate knowledge of words they had identified as not

known (i.e. rated NO) at the outset of the

course was evaluated by tallying numbers of words that students were able to

either define accurately or define accurately and use in a correct sentence

(see answer formats 2 and 3 in Figure 10). Then success rates for words that

were not entered in the Word Bank were compared to those for words that had

been entered. The mean percentage of words for which knowledge was successfully

demonstrated amounted to 17.5% in the case of the 5 un-entered items, while the

figure for the 5 entered items was nearly double at 31%. A t-test for correlated samples (two-tailed) indicated that this

difference narrowly missed significance at the .05 level (t = 2.04, p = .06). These

results appear in Table 5. The findings of this demonstration test provide

important substantiation for the gains registered on the ratings instrument.

The doubled gain for entered words found here corresponds to the doubled gain

found there; thus there is reason to assume that gains reported on the ratings

measure reflect demonstrable increases in knowledge of the meanings of words

rather than optimistic over-estimations.

Table 5

Means in percentages for successfully demonstrated knowledge of previously unknown words (n = 14)

| | un-entered | entered |
|---|---|---|
| Mean | 17.50 | 30.71 |
| SD | 10.09 | 21.56 |

Keys to success - The strategies question

Procedure

Students' use of the five resources, the

on-line dictionary, the concordancer, the Word Bank quiz feature, hypertext

reading and the cloze maker, was assessed in a survey administered at the end

of the 2002 session. Students were asked to indicate how often they used each

tool by choosing one of the following options: never, once or twice, fairly often, very often and almost always. Each answer was assigned

a number value ranging from 0 for never

to 4 for almost always.
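The coding scheme just described can be sketched as follows. The five labels and their number values come from the text; the sample answers are hypothetical, and the use of the sample standard deviation is an assumption about how the SDs were computed.

```python
from statistics import mean, stdev

# Number values assigned to the five survey options.
SCALE = {
    "never": 0,
    "once or twice": 1,
    "fairly often": 2,
    "very often": 3,
    "almost always": 4,
}

def summarize(responses):
    """Mean and sample standard deviation of coded survey responses."""
    scores = [SCALE[answer] for answer in responses]
    return mean(scores), stdev(scores)

# Hypothetical answers from four students about one tool.
answers = ["fairly often", "very often", "once or twice", "fairly often"]
m, sd = summarize(answers)
print(f"M = {m:.2f}, SD = {sd:.2f}")
```

On this scale a group mean of 2 corresponds to "fairly often" and a mean of 3 to "very often".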

Results

Mean ratings indicated that the most used

strategies were consulting the on-line dictionary directly (M = 2.43, SD = .85) and using the Word Bank quiz feature (M = 2.36, SD = .84). The group means place use of these two strategies in the

fairly often to very often range. Results for all five strategies are shown in

Table 6. An ANOVA for matched samples (df

= 4) and post hoc Tukey test indicated significant differences (p < .05) between the two most used

features (dictionary and Word Bank quiz) and the two least used features

(concordance and hypertext). Other comparisons did not deliver significant

differences. The finding that the dictionary was popular is not surprising. In

the weekly task of entering five words into the Word Bank, pasting in WordNet

definitions was probably an appealing alternative to manually typing in

definitions from a paper dictionary. The attraction of the Word Bank quiz is

also clear. No doubt students used this resource as they studied for the

midterm and final tests on Word Bank items. Mean use of the cloze maker, which

approaches the “fairly often” level (M = 1.79, SD = .80), was seen as

unexpectedly high by the course teacher who reported that she had directed

relatively little attention to this option in class.

Table 6

Mean ratings of five on-line activities (highest possible rating = 4)

| | On-line Dictionary | Concordance | Word Bank Quiz | Hypertext Reading | Cloze Maker |
|---|---|---|---|---|---|
| Mean | 2.43 | 1.57 | 2.36 | 1.57 | 1.79 |
| SD | .85 | .65 | .84 | 1.16 | .80 |

In a study of the 2000 session, where the use

of three strategies (on- and off-line dictionary use and concordancing) was

evaluated, a near-significant relationship was found between gains made on AWL

words in the course and use of the concordancer. Even though use of this

strategy was not particularly high, the multiple regression analysis suggested

that concordancing made a unique contribution to variance in scores (Horst

& Cobb, 2001). A similar analysis of gains in knowledge of words entered

into the Word Bank in relation to use of the five on-line strategies was

performed on the data from the 2002 session, but no significant relationships

were found. Of the five variables, the only one that pointed to a possible

connection to word gains was use of the Word Bank quiz (r = .39, p = .09). The

small size of the participant group (n

= 14) may explain the lack of clear findings. Also, since students were free to

study the words as they pleased, other more traditional ways of studying may

have obscured the contribution of the on-line tools.
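A correlation coefficient such as the r reported for the Word Bank quiz can be computed as in the sketch below. This is a simple bivariate correlation, not the multiple regression analysis mentioned above, and both data lists are hypothetical.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariation / sqrt(spread_x * spread_y)

# Hypothetical data: survey codes for quiz use (0-4) and word gains
# in percentage points for six learners.
quiz_use = [0, 1, 2, 3, 4, 2]
word_gains = [10, 20, 15, 30, 35, 25]
print(f"r = {pearson_r(quiz_use, word_gains):.2f}")
```

With a group as small as 14, even a moderate coefficient can fall short of significance, which is consistent with the caution expressed above about the size of the participant group.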

Conclusion

In sum, we believe that the tools

investigated in this study make a promising start on the program outlined by

Sökmen for computer assisted vocabulary learning. We took as a point of departure Sökmen’s (1997) challenge

to develop vocabulary acquisition tools that:

- are based on a corpus,

- expand and vary opportunities for rehearsal,

and

- engage the learner at a deep level.

We have tried to operationalise these ideas

in one of the several ways this might be done. To itemize, our course syllabus

is based on a frequency analysis of a corpus, and our learners have direct

access to corpus information; our learners have numerous and varied

opportunities for rehearsal such as re-encountering words in spoken form,

dictionaries, on-line word-banks, and self-administered and

teacher-administered quizzes; deeper learning is encouraged by having learners

contribute their own words, contexts, and definitions to the course materials,

and providing them with opportunities to meet words in novel contexts through

the concordance and the build-your-own cloze passages. In addition, we took up

Sökmen’s challenge also to look at

the Internet as a source for vocabulary activities, but have changed her “also” to a “through”: a corpus approach, at least as we have realized it, is

really only practicable if undertaken in a networked computer context — the

corpus access, collaboration, and general volume of our syllabus all depend on

it.

Yet another challenge we respond to in

Sökmen’s quotation is its date, 1997. As our review of web-learning materials

suggests, since 1997 the overwhelming majority of resources for web-based

vocabulary learning appear to be devoted to meeting infrequent words through

choose-a-synonym activities, with little in the way of initiating text or contexts

present and almost never with easy access to lexical resources such as the

Internet makes possible. We felt it was time to turn prescription into a real

set of materials focused in a course for real learners who face real

consequences in their acquisition of a second language. And yet in doing this,

in evolving the concept, technology, and pedagogy of a corpus-based approach,

we have found some reasons why the timeframe of such realizations is years

rather than months.

The questions we have asked of the approach

are necessarily the early questions. While the ultimate questions about a

corpus approach involve whether students expand their lexicons effectively

while in the course and are set on a path for further independent acquisition

following the course, the immediate questions also involve whether our

corpus-based on-line materials are even usable, and by learners with different

starting points and objectives; whether students can design learning materials

for other students; which learning tools they will choose given alternatives;

and whether they will voluntarily adapt themselves to deeper vocabulary

learning when shallower and easier alternatives are available. We believe the results of our experimentation

so far are positive and augur well for the further development of a

corpus-based approach.

First, the computer-based materials proved

usable and able to handle the volume of vocabulary processing that researchers

have long argued was possible but which we argue is only practical in a networked

context where students share their words and not every instance of processing

must pass through a teacher.

Second, it seems that many of these words, at

least those that pass through the Word Banks and the numerous opportunities for

further processing these provide, are not only processed but also learned, both

receptively and productively.

Third, our process and materials seem not

only usable but also able to be used and shared by learners with fundamentally

different starting points (Romance and Asian backgrounds) and different

objectives (different specialist areas).

Fourth, the learners have shown themselves

able to submit Word Bank entries (interesting words, clear examples, correct

part of speech, suitable creation or selection of definition) that can be used

by other learners (see also Horst & Cobb, 2001). The language of their

example sentences is informative, and there is no tendency to produce example

contexts too short to make any sense of. Further, the learners probably have

the capacity to provide each other with even clearer contexts, as was seen in

the upward movement in contextual support levels when the quiz option was added

to the Word Bank.

Fifth, the learners showed good interest in

deeper processing of new words on at least some occasions. For example, they

could have been content to meet AWL words in word lists and banks, and

self-quizzes which asked them to replace the word in the same context, but

instead they took the trouble to generate novel AWL cloze passages where they would

have to replace AWL words in gaps in texts of their own choosing “fairly

often”.

Yet the deeper processing question remains

far from answered even in the context of our course. We found in the earlier

study that concordancing, while not immensely popular, appeared to be predictive

of learning. Here, there was less use of concordancing, possibly because the

cloze maker programs may have given some of the same benefits of meeting words

in new contexts but in a more coherent textual scheme. However, there are

benefits to concordancing, such as the number and breadth of contexts for a

given word, and the possibilities of offering it as a help option at an

opportune moment (while working on a cloze passage, for example) that make us

want to continue developing ways to make concordancing more usable.

Our future plans for this course are

threefold:

- Materials. The Word Bank needs to be easier for

teachers to use. The next round of this course will offer a new

teacher-edit function, so that any errors in students' Word Bank entries

can quickly be cleaned up. The resources can also be expanded. Since the

period of this study, a number of new on-line dictionaries have become

available, including excellent advanced learner dictionaries from

Cambridge [6] and Longman [7], and some specialist ones such as Greaves’

bilingualised English-Chinese lexicon [2]. We intend to offer a menu of

such resources. Finally, the search goes on for a good learner corpus to

replace the Brown Corpus we are currently using. In fact, better general

and specialist corpora are needed. In the case of a general corpus, it is

no simple matter to find or develop one large enough to consistently offer

10 or more contextual examples for any of the thousands of middle-to-low

frequency words that an academic learner of English might opt to look

up. In our experience, a corpus

large enough to meet this standard tends to also be full of extraneous

off-list items that make the interpretation of even common words problematic.

Specialist corpora for specific domains of study are less of a problem to

develop, in principle, following a procedure established some years ago in

Sutarsyah, Nation and Kennedy (1994). Yet none have been developed that we

know of even for the most common academic disciplines.

- Learner tracking. We have begun looking at

which resources learners are using (concordances, cloze passages, etc.)

but we need to look more closely, as a step toward tying resource use to

learning outcomes. In the next run of this course, we will track concordance

use specifically. Since concordancing is on offer as a help option in

completing cloze passages and elsewhere in the suite of activities, it may

be getting use that the students do not recall when asked about it

separately on an end-of-course survey. It should be a fairly simple matter

to link use of this and other resources to the IP addresses of learners’

most often used computers and begin to track the sources and resources of

learning.

- Better testing. There appears to be no suitable

standard instrument available for assessing gains in an advanced

vocabulary course. The Vocabulary Levels Test serves well at 2000 and AWL

levels, but the 5000-10,000 level, with 30 test words representing 5000

word families, cannot be used in this way. Students might well learn or

begin to learn scores of new words in this frequency zone without

producing a ripple on such a test. In this study we have experimented with

ways of developing pre-post tests more tied to the words actually

encountered, and shall continue to pursue this avenue. We plan to draw on

techniques piloted in research by Horst (2000) to test changes in levels

of partial vocabulary knowledge; measures that are sensitive to

incremental growth may register acquisition at the level and pace of our

experimental course more clearly.

To wrap up, one corpus approach to on-line vocabulary acquisition has shown itself viable and has passed the first experimental tests. And yet, we feel there is at least as much work in front of us as behind us.

Notes

1. Following guidelines by Bauer and Nation

(1993), a word family is defined as a root word, e.g. produce, and its derived forms, e.g. product, production, unproductive, etc.

2. The assumption that the subjects would be thoroughly acquainted with high frequency English words proved to be somewhat mistaken, especially in the case of learners with Romance language background. As Figure 8 shows, over a quarter of the Romance entries appear on West’s (1953) list of the 2000 most frequent English word families.

Website addresses

1. The Compleat Lexical Tutor. http://132.208.224.131/

2. Virtual Language Centre. http://vlc.polyu.edu.hk/

3. Sandra Haywood’s Academic Vocabulary site. http://www.nottingham.ac.uk/~alzsh3/acvocab/index.htm

4. Duncan Mason’s Culture Shock page. http://www.faceweb.okanagan.bc.ca/cultureshock/index.html

5. Gerry’s Vocabulary Database. http://www.cict.co.uk/software/gvd/features.htm

6. Cambridge Advanced Learners Dictionary. http://dictionary.cambridge.org/

7. Longman Web Dictionary. http://www.longmanwebdict.com/

References

Bauer,

L. & Nation, P. (1993). Word

families. International Journal of

Lexicography. 6, 253-279.

Beck,

I. L., McKeown, M. G., & McCaslin, E. (1983). Vocabulary development: All contexts are not created equal. Elementary

School Journal, 83, 177-181.

Cobb,

T. (1999). Breadth and depth of

vocabulary acquisition with hands-on concordancing. Computer Assisted Language

Learning, 12, 345-360.

Cobb,

T. (2000). The compleat lexical

tutor [website] . Available:

http://132.208.224.131

Coxhead,

A. (2000). A new academic word

list. TESOL Quarterly, 34, 213-238.

Francis,

W. N., & Kucera, H. (1979). A standard corpus of present-day edited

American English, for use with digital computers. Department of Linguistics, Brown University.

Hazenberg,

S., & Hulstijn, J. (1996). Defining

a minimal receptive second language vocabulary for non-native university

students: An empirical investigation. Applied Linguistics, 17, 145-163.

Horst,

M., & Cobb, T. (2001). Growing

academic vocabulary with a collaborative on-line database. In B. Morrison, D. Gardner, K. Keobke, &

M. Spratt (Eds.), ELT perspectives on IT

& multimedia; Selected papers from the ITMELT Conference 2001 (pp. 189-225). Hong Kong: Hong Kong

Polytechnic University.

Horst,

M. & Meara, P. (1999). Test of a

model for predicting second language lexical growth through reading. Canadian

Modern Language Review, 56, 308-328.

Laufer, B. (1989). What percentage of lexis is necessary for comprehension? In C. Lauren & M. Norman (Eds.), From humans to thinking machines (pp. 316-323). Clevedon: Multilingual Matters.

Laufer, B. (1992). How much lexis is necessary for reading comprehension? In P. J. L. Arnaud & H. Bejoint (Eds.), Vocabulary and applied linguistics (pp. 126-132). London: Macmillan.

Laufer, B., & Hulstijn, J. (2001). Incidental vocabulary acquisition in a second language: The construct of task-induced involvement. Applied Linguistics, 22, 1-26.

Laufer, B., & Nation, P. (1995). Vocabulary size and use: Lexical richness in L2 written production. Applied Linguistics, 16, 307-322.

Nation, I. S. P. (1982). Beginning to learn foreign vocabulary: A review of the research. RELC Journal, 13(1), 14-36.

Nation, I. S. P. (2001). Learning vocabulary in another language. Cambridge: Cambridge University Press.

Schmitt, N. (2000). Vocabulary in language teaching. Cambridge: Cambridge University Press.

Schmitt, N., Schmitt, D., & Clapham, C. (2001). Developing and exploring the behaviour of two new versions of the Vocabulary Levels Test. Language Testing, 18(1).

Sökmen, A. J. (1997). Current trends in teaching second language vocabulary. In N. Schmitt & M. McCarthy (Eds.), Vocabulary: Description, acquisition and pedagogy (pp. 237-257). Cambridge: Cambridge University Press.

Sutarsyah, C., Nation, P., & Kennedy, G. (1994). How useful is EAP vocabulary for ESP? A corpus based study. RELC Journal, 25, 34-50.

Wesche, M., & Paribakht, T. S. (1996). Assessing vocabulary knowledge: Depth versus breadth. Canadian Modern Language Review, 53, 13-39.

West, M. (1953). A general service list of English words. London: Longman, Green and Co.

Xue, G., & Nation, I. S. P. (1984). A university word list. Language Learning and Communication, 3, 215-229.

Zahar, R., Cobb, T., & Spada, N. (2001). Conditions of vocabulary acquisition. Canadian Modern Language Review, 57, 541-572.

Biographical Statements

Marlise Horst is an assistant professor at the TESL Centre of the Department of Education at Concordia University in Montreal. Her research focuses on extensive reading and computer-assisted vocabulary learning.

Ioana Nicolae recently completed an MA in Applied Linguistics at Concordia University. Her thesis research investigated grammatical and semantic aspects of word knowledge in university learners of English.

Tom Cobb is a professor in the département de linguistique et de didactique des langues at Université de Québec à Montreal. He specializes in developing on-line tools for vocabulary learning and research.